Your roadmap to mastering coding essentials and beyond.

Universal Approximation Theorem Explained: Why Neural Networks Can Approximate Any Continuous Function

The Universal Approximation Theorem (UAT) gets quoted constantly, but it is usually described in a fuzzier way than it deserves. It does not say neural networks are magically good at every task. It does not say a shallow network is the most practical architecture. It does not say gradient descent will easily find the right weights. What it does say is still important: With a suitable nonlinear activation and enough hidden units, a feedforward network can approximate any continuous function on a compact (closed and bounded) domain as closely as we want. ...
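To make "enough hidden units" concrete, here is a minimal constructive sketch, not the theorem's proof. It assumes sigmoid activations, a target of sin(2πx) on [0, 1], and hand-picked weights: each hidden unit is a steep sigmoid "step", and the output layer adds those steps into a staircase that follows the function. The weights are set by hand rather than learned, which also illustrates that the theorem says nothing about training.

```python
import math
from typing import Callable


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def build_step_network(f: Callable[[float], float], n_hidden: int,
                       sharpness: float = 20.0) -> Callable[[float], float]:
    """Hand-build a one-hidden-layer sigmoid network that approximates f on [0, 1].

    Hidden unit i switches on halfway between grid points x_{i-1} and x_i.
    Its output weight is the jump f(x_i) - f(x_{i-1}), so the weighted sum
    of steps traces a staircase that follows f.
    """
    h = 1.0 / n_hidden
    grid = [i * h for i in range(n_hidden + 1)]
    w = sharpness / h  # steeper input weights for finer grids
    units = [
        (w, -w * (grid[i - 1] + h / 2), f(grid[i]) - f(grid[i - 1]))
        for i in range(1, n_hidden + 1)
    ]
    out_bias = f(grid[0])

    def net(x: float) -> float:
        return out_bias + sum(v * sigmoid(a * x + b) for a, b, v in units)

    return net


def max_error(f: Callable[[float], float], net: Callable[[float], float],
              n_points: int = 501) -> float:
    xs = [k / (n_points - 1) for k in range(n_points)]
    return max(abs(f(x) - net(x)) for x in xs)


def target(x: float) -> float:
    return math.sin(2 * math.pi * x)


errors = [max_error(target, build_step_network(target, n)) for n in (10, 100, 1000)]
print(errors)  # worst-case error shrinks as hidden units are added
```

The error drops roughly in proportion to 1/n here, which is the "as closely as we want" part. Note how many units a plain staircase needs; that inefficiency is one reason the theorem does not make shallow networks the practical choice.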

Source Maps Explained: How They Work and Why They Sometimes Leak Source Code

Most developers only think about source maps when DevTools magically shows the original TypeScript instead of unreadable bundled JavaScript. That convenience hides an important fact: A source map is not just “debug metadata.” It is a translation table between generated code and original source code. And depending on how it is emitted, it can contain the original source itself.

That is why source maps sit at the intersection of:

- debugging
- build tooling
- browser DevTools
- error reporting systems like Sentry
- security and accidental code exposure

If you have ever wondered how a minified file can still produce readable stack traces, or how a published .map file can expose a package’s real TypeScript source, this is the mental model you want. ...
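A minimal sketch of both halves of that idea, assuming a version 3 source map: the `mappings` string is the translation table (Base64 VLQ segments, `;` per generated line, `,` per segment), and the optional `sourcesContent` array is where full original files can ride along. It skips `sourceRoot`, the optional name index, and indexed maps with `sections`; the example map and its contents are made up.

```python
from typing import Dict, List, Tuple

BASE64 = "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/"
DIGIT = {ch: i for i, ch in enumerate(BASE64)}


def decode_vlq(segment: str) -> List[int]:
    """Decode one Base64 VLQ segment into a list of signed integers."""
    values: List[int] = []
    value = shift = 0
    for ch in segment:
        digit = DIGIT[ch]
        value |= (digit & 0b11111) << shift  # low 5 bits carry data
        if digit & 0b100000:  # continuation bit: more digits follow
            shift += 5
            continue
        # Lowest bit of the assembled value is the sign, the rest is magnitude.
        values.append(-(value >> 1) if value & 1 else value >> 1)
        value = shift = 0
    return values


def decode_mappings(mappings: str) -> List[Tuple[int, int, int, int, int]]:
    """Return (gen_line, gen_col, source_index, orig_line, orig_col), all 0-based."""
    result = []
    source = orig_line = orig_col = 0  # these deltas carry across lines
    for gen_line, line in enumerate(mappings.split(";")):
        gen_col = 0  # generated column restarts on every generated line
        for segment in line.split(","):
            if not segment:
                continue
            fields = decode_vlq(segment)
            gen_col += fields[0]
            if len(fields) >= 4:  # 1-field segments have no original position
                source += fields[1]
                orig_line += fields[2]
                orig_col += fields[3]
                result.append((gen_line, gen_col, source, orig_line, orig_col))
    return result


def embedded_sources(source_map: dict) -> Dict[str, str]:
    """Original files whose full text ships inside the map via sourcesContent."""
    contents = source_map.get("sourcesContent") or []
    return {name: text for name, text in zip(source_map.get("sources", []), contents)
            if text is not None}


example_map = {
    "version": 3,
    "file": "app.min.js",
    "sources": ["src/app.ts"],
    "sourcesContent": ["export const apiKey = 'internal-only';\n"],
    "names": [],
    "mappings": "AAAA,SAAS;AACA",
}
print(decode_mappings(example_map["mappings"]))
# [(0, 0, 0, 0, 0), (0, 9, 0, 0, 9), (1, 0, 0, 1, 9)]
print(embedded_sources(example_map)["src/app.ts"])
```

Anyone who can fetch the `.map` file can run the second function, which is why shipping `sourcesContent` publicly amounts to publishing the original source.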

How Adblock Extensions Work and How to Customize Their Behavior

When people think about adblock extensions, they usually imagine something simple: “The extension sees an ad and hides it.” That is only part of the story. Tools like uBlock Origin are better understood as content blockers, not just ad blockers. They do block ads, but they also block:

- trackers
- popups
- malware domains
- anti-blocker scripts
- other unwanted page behavior

Modern blockers such as uBlock Origin mostly work by applying rules to: ...
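As a rough illustration of rule-based blocking, here is a toy matcher for a small subset of the Adblock Plus style syntax that uBlock Origin also understands: `||domain^` block rules, `@@` exception rules, and `!` comment lines. It is a simplified sketch under that assumption; real blockers compile large lists into fast matchers and support paths, `$` options, and cosmetic `##` rules that are ignored here. The domains are placeholders.

```python
from typing import Iterable, List, Tuple
from urllib.parse import urlsplit


def parse_rules(lines: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Collect domains from '||domain^' block rules and '@@||domain^' exception rules."""
    blocked: List[str] = []
    allowed: List[str] = []
    for raw in lines:
        rule = raw.strip()
        if not rule or rule.startswith("!"):  # '!' marks a comment line
            continue
        target = blocked
        if rule.startswith("@@"):  # '@@' turns a rule into an exception
            target, rule = allowed, rule[2:]
        if rule.startswith("||") and rule.endswith("^"):
            target.append(rule[2:-1].lower())
        # Everything else (paths, $options, cosmetic '##' rules) is ignored here.
    return blocked, allowed


def host_matches(host: str, domain: str) -> bool:
    # '||example.com^' covers example.com itself and any subdomain of it.
    return host == domain or host.endswith("." + domain)


def should_block(url: str, blocked: List[str], allowed: List[str]) -> bool:
    host = (urlsplit(url).hostname or "").lower()
    if any(host_matches(host, d) for d in allowed):
        return False  # exceptions win over block rules
    return any(host_matches(host, d) for d in blocked)


blocked, allowed = parse_rules([
    "! hypothetical filter list",
    "||ads.example^",
    "||tracker.example^",
    "@@||cdn.tracker.example^",
])
print(should_block("https://img.ads.example/banner.png", blocked, allowed))  # True
print(should_block("https://cdn.tracker.example/lib.js", blocked, allowed))  # False
```

Customizing a blocker largely comes down to adding lines like these: a new block rule to catch something the default lists miss, or an exception rule when a list breaks a site you trust.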

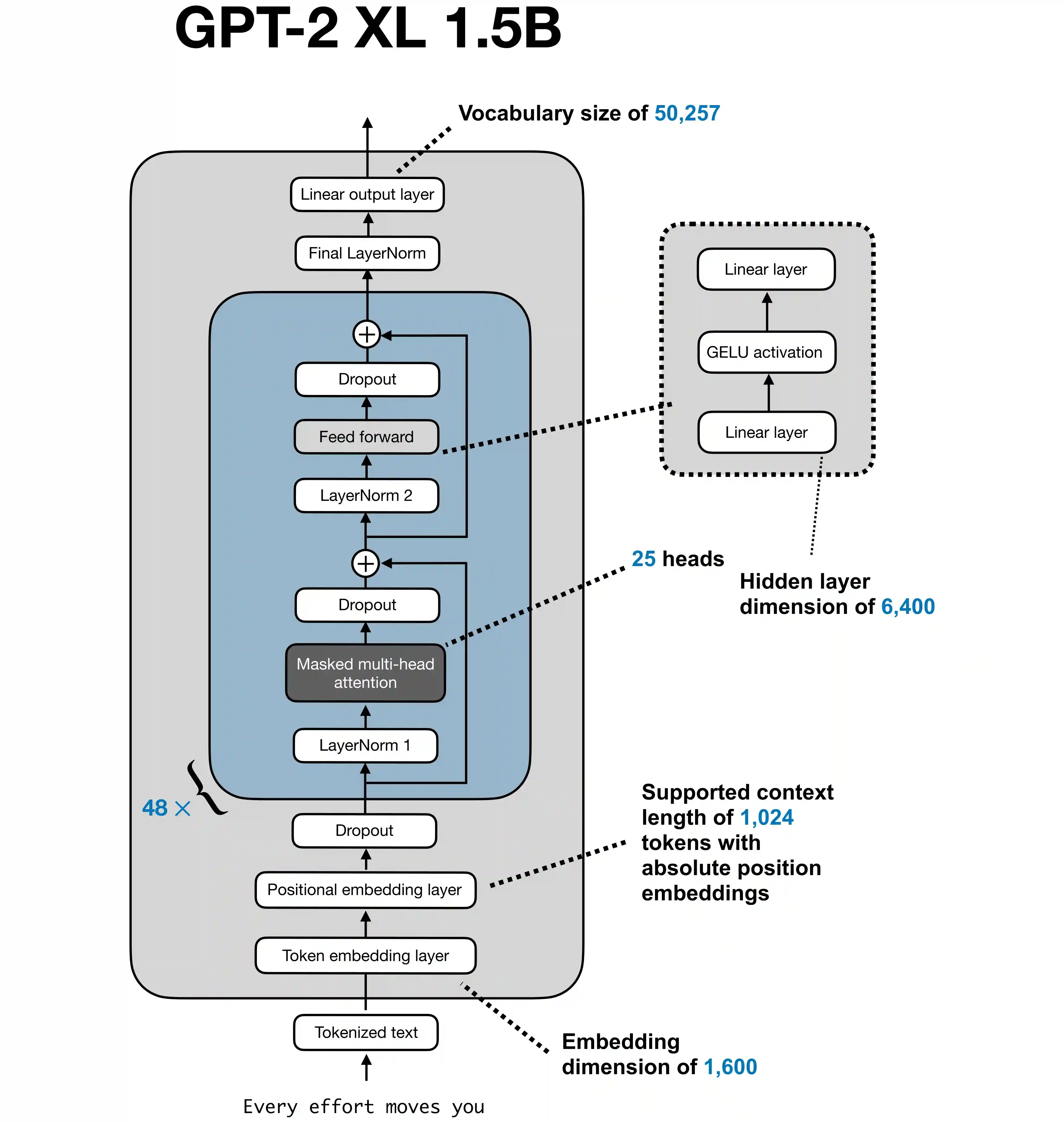

Understanding LLM Architecture: Layers, Transformer Blocks, and Attention Heads

Large Language Models (LLMs) such as GPT-2, GPT-3, LLaMA, and BERT are built on top of the Transformer architecture. That architecture changed natural language processing by replacing recurrence with attention, which lets models process all positions of a sequence in parallel during training and capture long-range relationships more directly. If you are trying to understand what terms like layer, transformer block, and attention head actually mean, the easiest way is to follow the path a sentence takes through a GPT-style model. ...
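To see what an "attention head" is mechanically, here is a minimal pure-Python sketch of the multi-head causal self-attention sub-layer of a GPT-style (decoder-only) block. The sizes (8-dim embeddings, 2 heads of 4 dims, 3 tokens) and the random weights are arbitrary assumptions; residual connections, layer normalization, and the MLP that complete a real transformer block are left out.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_head(x: Matrix, wq: Matrix, wk: Matrix, wv: Matrix) -> Matrix:
    """One attention head: scaled dot-product attention with a causal mask."""
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    scale = math.sqrt(len(q[0]))
    out = []
    for i in range(len(x)):
        # Token i only scores positions 0..i, so it never sees future tokens.
        weights = softmax([sum(a * b for a, b in zip(q[i], k[j])) / scale
                           for j in range(i + 1)])
        out.append([sum(w * v[j][c] for j, w in enumerate(weights))
                    for c in range(len(v[0]))])
    return out


def multi_head_attention(x: Matrix, heads: List[Tuple[Matrix, Matrix, Matrix]],
                         wo: Matrix) -> Matrix:
    per_head = [causal_head(x, *h) for h in heads]
    # Concatenate head outputs per token, then mix them with the output projection.
    concat = [sum((h[t] for h in per_head), []) for t in range(len(x))]
    return matmul(concat, wo)


random.seed(0)


def rand_matrix(rows: int, cols: int) -> Matrix:
    return [[random.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


d_model, n_heads, seq_len = 8, 2, 3
head_dim = d_model // n_heads  # each head works in a 4-dim subspace
heads = [tuple(rand_matrix(d_model, head_dim) for _ in range(3)) for _ in range(n_heads)]
wo = rand_matrix(d_model, d_model)
x = rand_matrix(seq_len, d_model)  # stand-in token embeddings
y = multi_head_attention(x, heads, wo)
print(len(y), len(y[0]))  # 3 8
```

Every head sees the same tokens but through its own Q, K, and V projections, and the output projection recombines them into one vector per token. A "layer" in a model like GPT-2 stacks this sub-layer with the MLP and repeats the whole block many times.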

How Much Do LLMs Hallucinate in Document Q&A? Key Lessons from a 172B-Token Study

If you are building a RAG system, internal knowledge assistant, or document search chatbot, one question matters more than almost anything else: When the answer is supposed to come from the provided documents, how often does the model still make things up? That is exactly what the March 9, 2026 paper “How Much Do LLMs Hallucinate in Document Q&A Scenarios? A 172-Billion-Token Study Across Temperatures, Context Lengths, and Hardware Platforms” tries to measure. ...

CAP Theorem Explained: Consistency vs Availability in Distributed Systems

The CAP theorem is one of the most important ideas in distributed systems because it explains why “just make it always correct and always online” is not a realistic requirement once multiple nodes and unreliable networks enter the picture. In simple terms, CAP says that when a network partition happens, a distributed system can prioritize either:

- Consistency: every read sees the most recent write (or gets an error)
- Availability: every request to a non-failing node gets a non-error response

But not both at the same time. Partition tolerance is not a feature you casually add or remove. If your system runs across multiple machines, partitions are a fact of life, so the real design choice is usually CP vs AP. ...
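A toy two-replica simulation can make the CP vs AP choice tangible. This is an illustrative sketch, not a real replication protocol: it assumes a CP system that requires a majority (here, both nodes) and an AP system that accepts writes locally and lets replicas diverge during a partition. The class and method names are hypothetical.

```python
from typing import Dict, Optional


class Unavailable(Exception):
    """Raised when a node refuses a request rather than risk inconsistency."""


class ToyCluster:
    """Two replicas, A and B. While partitioned, they cannot exchange writes."""

    def __init__(self, mode: str) -> None:
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.data: Dict[str, Dict[str, str]] = {"A": {}, "B": {}}
        self.partitioned = False

    def _check_quorum(self, node: str) -> None:
        # With two nodes, a majority is both of them. During a partition
        # neither side has a majority, so a CP system refuses the request.
        if self.partitioned and self.mode == "CP":
            raise Unavailable(f"node {node} cannot reach a majority")

    def write(self, node: str, key: str, value: str) -> None:
        self._check_quorum(node)
        self.data[node][key] = value
        if not self.partitioned:
            peer = "B" if node == "A" else "A"
            self.data[peer][key] = value  # replicate while the link is up
        # AP during a partition: accepted locally only, replicas may diverge.

    def read(self, node: str, key: str) -> Optional[str]:
        self._check_quorum(node)
        return self.data[node].get(key)


cp = ToyCluster("CP")
cp.write("A", "x", "1")
cp.partitioned = True
try:
    cp.write("A", "x", "2")
    cp_refused = False
except Unavailable:
    cp_refused = True

ap = ToyCluster("AP")
ap.write("A", "x", "1")
ap.partitioned = True
ap.write("A", "x", "2")
print(cp_refused, ap.read("A", "x"), ap.read("B", "x"))  # True 2 1
```

The CP cluster stays correct by saying "no"; the AP cluster keeps answering but node B serves a stale value until the partition heals and the replicas reconcile.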

Attention Mechanisms Explained: Self-Attention, Cross-Attention, Sparse Attention, MQA, GQA, and DeepSeek MLA

Attention is the idea that made modern transformers practical and powerful. Instead of compressing an entire input into one fixed vector, a model can decide, token by token, which pieces of the context matter most right now. That sounds simple, but there are many different kinds of attention mechanisms, and they exist because models face different constraints:

- some need strong alignment between an encoder and a decoder
- some need to generate text one token at a time without looking ahead
- some need to handle very long documents
- some need to reduce GPU memory traffic at inference time

This article walks through the main families of attention, shows where they fit, and explains why newer variants such as DeepSeek’s multi-head latent attention (MLA) matter. ...
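The memory-traffic constraint is easiest to see through KV cache arithmetic. This sketch uses a hypothetical model (32 layers, 32 query heads of size 128, a 4096-token context, 2-byte fp16 values) and compares how many key/value heads each variant caches. For MLA it assumes a 512-dim compressed latent plus a 64-dim positional key per token and layer; these are illustrative values that, to our understanding, are close to what DeepSeek-V2 reports, and real MLA models use different head counts, so read that line as the shape of the formula rather than a like-for-like benchmark.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """MHA/GQA/MQA cache: one K and one V vector per KV head, per layer, per token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def latent_cache_bytes(n_layers: int, latent_dim: int, rope_dim: int,
                       seq_len: int, bytes_per_value: int = 2) -> int:
    """MLA-style cache: one compressed latent (shared by K and V) plus a small
    positional key, per layer, per token."""
    return n_layers * (latent_dim + rope_dim) * seq_len * bytes_per_value


# Hypothetical config: 32 layers, 32 query heads of size 128, 4096 tokens, fp16.
LAYERS, HEADS, HEAD_DIM, SEQ = 32, 32, 128, 4096
MIB = 1024 ** 2
sizes = {
    "MHA (32 KV heads)": kv_cache_bytes(LAYERS, HEADS, HEAD_DIM, SEQ),
    "GQA (8 KV heads)": kv_cache_bytes(LAYERS, 8, HEAD_DIM, SEQ),
    "MQA (1 KV head)": kv_cache_bytes(LAYERS, 1, HEAD_DIM, SEQ),
    "MLA (512 latent + 64 RoPE)": latent_cache_bytes(LAYERS, 512, 64, SEQ),
}
for name, size in sizes.items():
    print(f"{name}: {size / MIB:.0f} MiB")
# MHA (32 KV heads): 2048 MiB
# GQA (8 KV heads): 512 MiB
# MQA (1 KV head): 64 MiB
# MLA (512 latent + 64 RoPE): 144 MiB
```

Every generated token has to read this cache, so shrinking it (fewer KV heads in GQA/MQA, a compressed latent in MLA) directly reduces memory traffic per decoding step, at some cost in modeling capacity that each variant trades off differently.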

How to Search AWS CloudWatch Logs Effectively

When people say they want to “search in CloudWatch”, what they usually need is CloudWatch Logs Insights. It is much more useful than manually opening individual log streams because you can search across log groups, combine conditions, sort by timestamp, and limit results quickly. That said, AWS also has the basic log search interface inside a log group. If you select all streams and search there, the syntax is different: it uses filter patterns, not the Logs Insights query language. ...
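To show the two syntaxes side by side, here is a small sketch with two hypothetical helpers. The first builds a Logs Insights query (the kind of string you could pass as `queryString` to boto3's `logs.start_query`); the second builds a filter pattern for the basic log group search (or the `filterPattern` parameter of `filter_log_events`). It assumes simple word terms and does not handle quoting or escaping.

```python
from typing import Iterable, Sequence


def build_insights_query(patterns: Iterable[str],
                         fields: Sequence[str] = ("@timestamp", "@message", "@logStream"),
                         limit: int = 50) -> str:
    """Logs Insights query: each pattern becomes a regex filter; all filters must match."""
    commands = ["fields " + ", ".join(fields)]
    for pattern in patterns:
        if "/" in pattern:
            raise ValueError("this sketch does not escape '/' inside /regex/ literals")
        commands.append(f"filter @message like /{pattern}/")
    commands += ["sort @timestamp desc", f"limit {limit}"]
    return "\n| ".join(commands)


def build_filter_pattern(terms: Iterable[str], match_any: bool = False) -> str:
    """Filter-pattern syntax for the basic log group search (simple word terms only).

    Space-separated terms must all appear; a '?' prefix on each term means
    any one of them may appear.
    """
    return " ".join(("?" + t) if match_any else t for t in terms)


print(build_insights_query(["ERROR", "timeout"], limit=20))
print(build_filter_pattern(["ERROR", "WARN"], match_any=True))  # ?ERROR ?WARN
```

The first call prints a piped query that filters on both terms, sorts newest first, and stops at 20 results. The same intent in filter-pattern syntax is just `ERROR timeout`, with no sorting or limiting.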

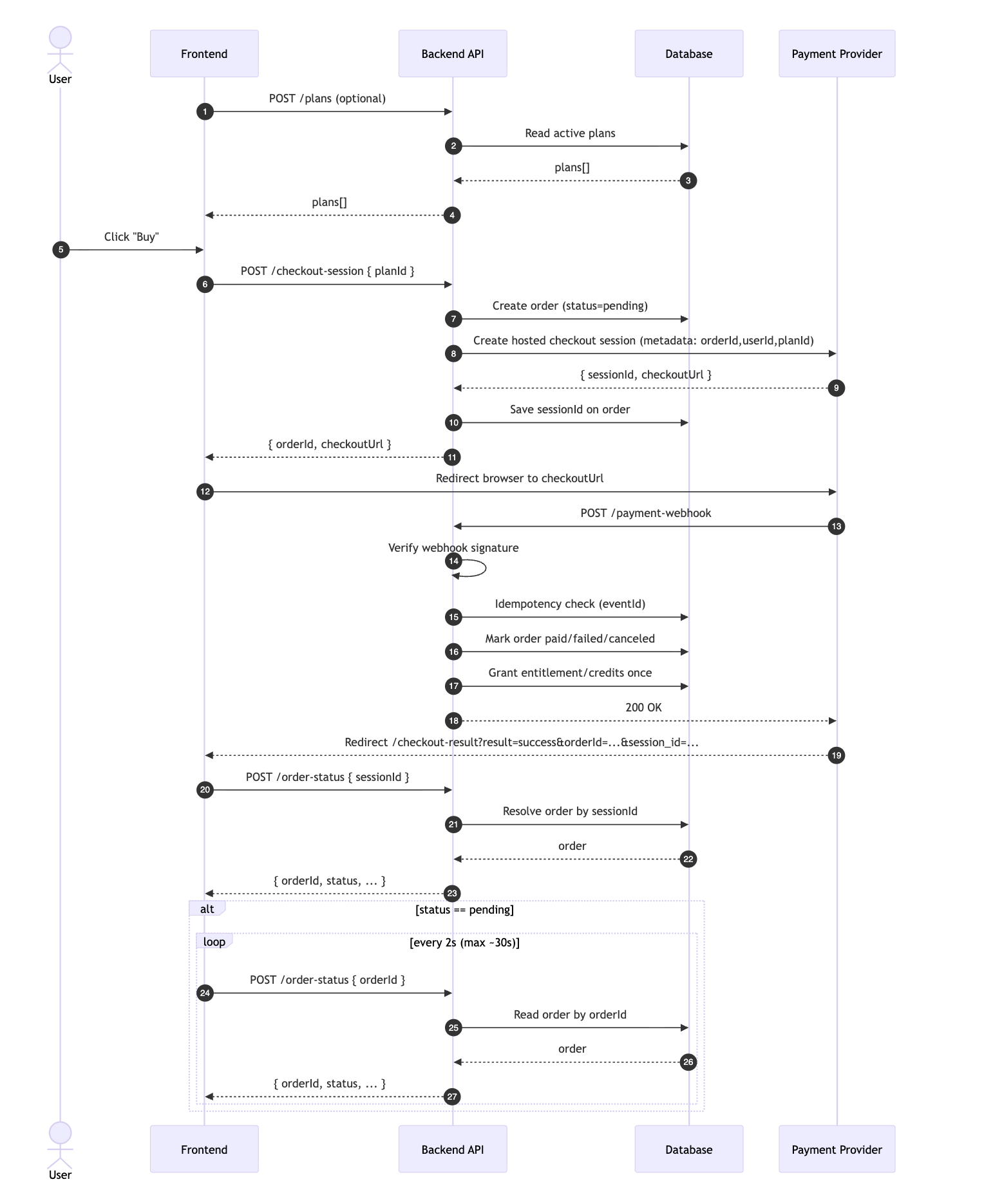

Integrating Stripe Payment with Checkout Flow (Webhooks + Polling)

If you use a hosted payment page like Stripe Checkout, the safest architecture is:

1. Create an internal order before redirecting.
2. Redirect the user to the hosted checkout URL.
3. Use webhooks as the source of truth for fulfillment.
4. Let the frontend poll order status after return.

This avoids race conditions and ensures users still get entitlements even if they close the tab before the success page loads.

Why This Pattern Works

Hosted checkout redirects are excellent for UX and compliance, but redirects are not guaranteed delivery signals. ...
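A minimal in-memory sketch of the four steps, assuming the order ID is passed to Checkout as `client_reference_id` and that the webhook signature has already been verified (in a real handler, for example with stripe-python's `stripe.Webhook.construct_event` and the `Stripe-Signature` header). `ORDERS`, `fulfill`, and the event IDs are hypothetical stand-ins for a database, your entitlement logic, and real Stripe events.

```python
import uuid
from typing import Dict, Set

ORDERS: Dict[str, dict] = {}        # hypothetical order table
PROCESSED_EVENTS: Set[str] = set()  # webhooks can be delivered more than once


def create_order(user_id: str, sku: str) -> str:
    """Step 1: record a pending order before redirecting to Checkout."""
    order_id = str(uuid.uuid4())
    ORDERS[order_id] = {"user_id": user_id, "sku": sku,
                        "status": "pending", "fulfilled": 0}
    return order_id  # pass this as client_reference_id when creating the session


def fulfill(order: dict) -> None:
    """Hypothetical entitlement grant; must run at most once per order."""
    order["fulfilled"] += 1


def handle_stripe_event(event: dict) -> None:
    """Step 3: the webhook is the source of truth (signature check assumed done)."""
    if event["id"] in PROCESSED_EVENTS:
        return  # duplicate delivery
    PROCESSED_EVENTS.add(event["id"])
    if event["type"] != "checkout.session.completed":
        return
    session = event["data"]["object"]
    order = ORDERS.get(session.get("client_reference_id"))
    if order is None or order["status"] == "paid":
        return
    if session.get("payment_status") == "paid":
        order["status"] = "paid"
        fulfill(order)


def order_status(order_id: str) -> str:
    """Step 4: what the success page polls after the redirect."""
    order = ORDERS.get(order_id)
    return order["status"] if order else "not_found"


oid = create_order("user_1", "pro_plan")
print(order_status(oid))  # pending
evt = {"id": "evt_demo_1", "type": "checkout.session.completed",
       "data": {"object": {"client_reference_id": oid, "payment_status": "paid"}}}
handle_stripe_event(evt)
handle_stripe_event(evt)  # simulated duplicate delivery
print(order_status(oid), ORDERS[oid]["fulfilled"])  # paid 1
```

The success page never grants anything itself; it only reads `order_status`, so closing the tab early cannot lose a purchase. For delayed payment methods, `payment_status` can still be `unpaid` at completion, and Stripe later sends `checkout.session.async_payment_succeeded`, which a fuller handler would also accept.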

How We Added a Developer Tools Section in Hugo (Client-Side Only)

We recently added a dedicated Developer Tools section to Learn Code Camp and shipped multiple utility tools in one go. The goal was simple:

Client-side only. Your data stays in your browser on this page.

That requirement shaped every implementation choice.

What We Added

We added a new /tools section with these live tools:

- JSON Formatter + Validator
- Base64 Encode/Decode
- URL Encode/Decode
- UUID Generator (v4)
- Unix Timestamp Converter
- JWT Decoder
- Regex Tester
- Text Diff Checker
- Hash Generator (SHA-256, MD5)

Why Client-Side Only?

For utility tools, people often paste sensitive payloads: tokens, configs, logs, API responses, and JSON with private fields. ...
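As an example of why a tool like the JWT decoder can run entirely locally, here is a minimal Python sketch of the decoding logic. It is not the site's implementation (which runs in the browser); it only shows that a JWT's header and payload are unpadded base64url-encoded JSON, so decoding needs no server. Decoding is not verification: this sketch never checks the signature. The sample token is built on the spot with made-up claims.

```python
import base64
import json
from typing import Tuple


def b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url without '=' padding; restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def decode_jwt(token: str) -> Tuple[dict, dict]:
    """Return (header, payload) WITHOUT verifying the signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    return json.loads(b64url_decode(parts[0])), json.loads(b64url_decode(parts[1]))


# Build a throwaway token locally; the signature part is a placeholder.
token = ".".join([
    b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode()),
    b64url_encode(json.dumps({"sub": "123", "name": "Ada"}).encode()),
    "signature-not-checked",
])
header, payload = decode_jwt(token)
print(header["alg"], payload["name"])  # HS256 Ada
```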